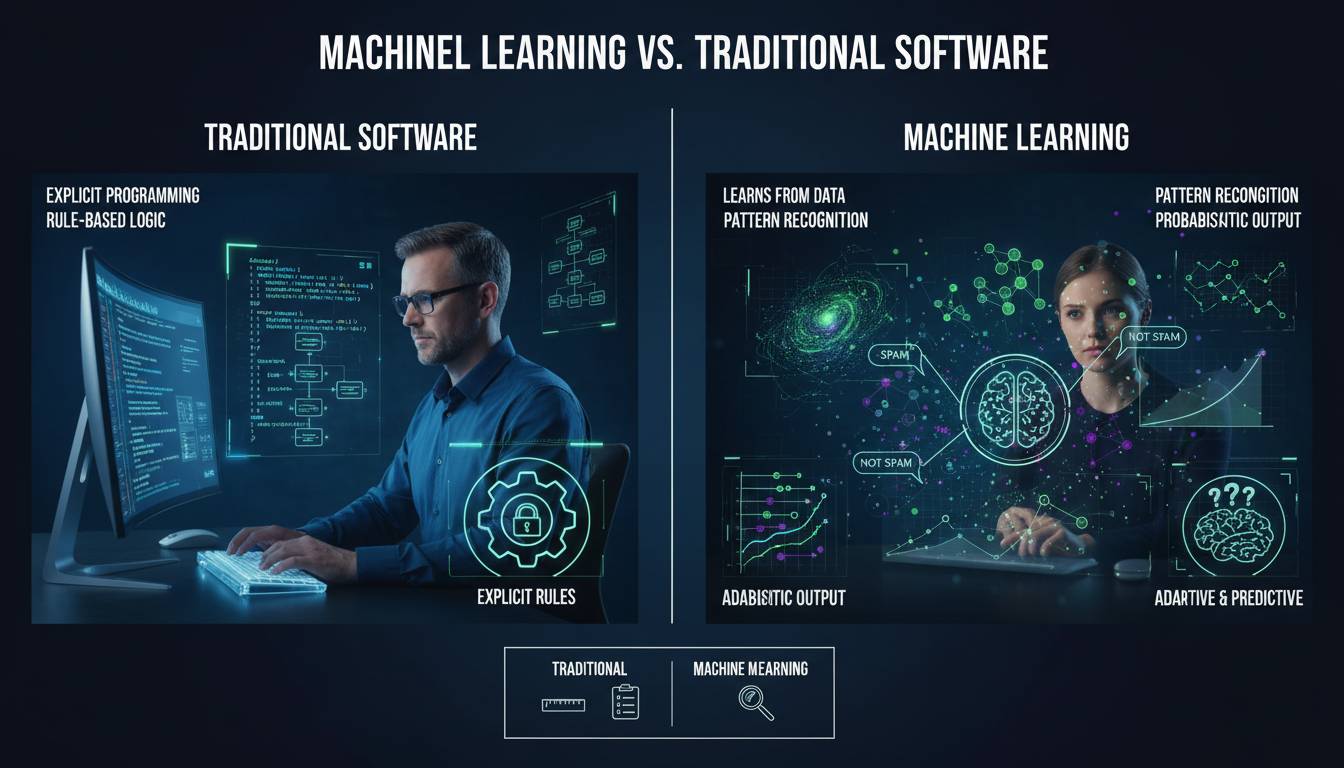

The distinction isn’t just technical — it’s philosophical. Traditional software tells a computer exactly what to do, step by step, like following a recipe. Machine learning, by contrast, shows a computer thousands of examples and lets it figure out the recipe itself. That difference affects everything: how you build software, what problems you can solve, and where human judgment fits in. If you’re evaluating whether to build something with traditional code or train a model, the answer is usually clear once you understand what’s actually happening under the hood.

This guide breaks down those differences with concrete examples and a framework you can use when making real decisions.

The Fundamental Difference: Rules Versus Data

Traditional software operates on explicit programming. Every behavior, every decision branch, every edge case gets coded by a human who understands the rules. You write “if the user’s age is greater than 18, grant access” and the computer follows that exactly, forever, until someone changes the code.

Machine learning flips this. Instead of programming rules, you program the learning process. You show the system thousands of examples — labeled data showing what correct outputs look like — and the algorithm discovers patterns you’d be hard-pressed to write as explicit rules.

Consider spam detection. In traditional software, you’d write rules like “if the email contains ‘free money’ and ‘act now,’ mark as spam.” This works until spammers change their wording to “fr33 mon3y” or “do it now.” You spend years playing whack-a-mole with edge cases.
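To make that brittleness concrete, here is a minimal sketch of the rule described above. The function name `is_spam_by_rules` and the two sample messages are illustrative assumptions, not taken from any real filter.

```python
def is_spam_by_rules(email_text: str) -> bool:
    """Flag an email only when both hard-coded phrases appear."""
    text = email_text.lower()
    return "free money" in text and "act now" in text


# The original wording is caught...
caught = is_spam_by_rules("FREE MONEY inside, act now!")
# ...but a trivial respelling slips through until someone adds another rule.
missed = is_spam_by_rules("fr33 mon3y inside, do it now!")

print(caught, missed)
```

Every new spelling needs another hand-written condition, which is the whack-a-mole problem in miniature.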

A machine learning approach trains on millions of emails labeled as spam or not spam. The model learns that certain word combinations, sender characteristics, and metadata patterns correlate with spam — patterns that would take a human programmer decades to enumerate. It also catches variations a human never explicitly coded for.
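As a rough illustration of the learned alternative, the sketch below trains a toy naive Bayes classifier in plain Python. Naive Bayes is just one common choice for text classification, picked here for brevity; the six training messages and the names `train` and `predict` are invented for the example, and a production filter would use far more data and usually an established library.

```python
import math
from collections import Counter

# Invented toy data; a real filter would train on millions of labeled emails.
TRAINING_EMAILS = [
    ("win free money now", "spam"),
    ("claim your free prize now", "spam"),
    ("free money waiting act now", "spam"),
    ("meeting moved to monday", "ham"),
    ("lunch at noon tomorrow", "ham"),
    ("please review the budget report", "ham"),
]


def train(examples):
    """Count how often each word appears under each label."""
    word_counts = {}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts.setdefault(label, Counter()).update(text.lower().split())
    vocabulary = set()
    for counts in word_counts.values():
        vocabulary.update(counts)
    return word_counts, label_counts, vocabulary


def predict(model, text):
    """Return the label with the highest log-probability (Laplace smoothing)."""
    word_counts, label_counts, vocabulary = model
    total_examples = sum(label_counts.values())
    scores = {}
    for label, count in label_counts.items():
        score = math.log(count / total_examples)
        words_in_label = sum(word_counts[label].values())
        for word in text.lower().split():
            if word in vocabulary:  # ignore words never seen in training
                smoothed = word_counts[label][word] + 1
                score += math.log(smoothed / (words_in_label + len(vocabulary)))
        scores[label] = score
    return max(scores, key=scores.get)


model = train(TRAINING_EMAILS)
print(predict(model, "free prize money"))         # a phrase never seen verbatim
print(predict(model, "budget meeting tomorrow"))
```

Nobody wrote a rule for "free prize money"; the classification falls out of word statistics in the labeled examples.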

This is the core difference. Traditional software encodes what you know, while machine learning discovers what you don’t know you know.

How Traditional Software Actually Works

Traditional software development follows a predictable lifecycle. You gather requirements, write specifications, implement logic, test against known cases, and deploy. The knowledge lives in the code itself, explicitly written by developers who understand the domain.

This approach excels when problems have clear, enumerable rules. Financial calculators, inventory management systems, text editors, and payroll processing all follow definable logic. When something breaks, you can trace through the code and find exactly where the behavior diverged from expectations.

The limitations become apparent in three scenarios. First, when the rules are too complex to specify — image recognition, where explaining to a computer what a cat looks like in pixel-by-pixel terms proves impossible. Second, when the rules change constantly — fraud detection, where attackers continuously adapt. Third, when the problem requires judgment in ambiguous situations — predicting whether a loan applicant will default involves factors that resist clean logical expression.

The maintenance burden in traditional software grows with complexity. Every new edge case requires new code. Every rule change requires developer intervention. The system is only as good as the programmer’s ability to anticipate every situation.

How Machine Learning Works

Machine learning shifts the burden from programming solutions to programming the learning process. You don’t tell the computer what a spam email looks like. You show it millions of emails and let it identify patterns.

The workflow looks different. You gather and label data — often the most expensive and time-consuming step. You split data into training and test sets. You select an algorithm appropriate for your problem type. You train the model, evaluate its performance on held-out data, tune hyperparameters, and deploy.
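The split, train, and evaluate steps can be sketched in a few lines. This is a deliberately tiny example under stated assumptions: the dataset is invented and cleanly separable, the "model" is a single threshold, and hyperparameter tuning and deployment are left out.

```python
import random

# Invented, cleanly separable toy data: (feature value, label).
dataset = [(float(v), 0) for v in range(0, 10)] + [(float(v), 1) for v in range(20, 30)]

# 1. Shuffle and split into training and held-out test sets.
rng = random.Random(0)  # fixed seed so the split is reproducible
rng.shuffle(dataset)
split_at = int(len(dataset) * 0.75)
train_set, test_set = dataset[:split_at], dataset[split_at:]


def fit_threshold(examples):
    """'Train' by placing a threshold halfway between the two class means."""
    class0 = [x for x, label in examples if label == 0]
    class1 = [x for x, label in examples if label == 1]
    return (sum(class0) / len(class0) + sum(class1) / len(class1)) / 2


def accuracy(threshold, examples):
    """Fraction of examples whose predicted label matches the true label."""
    correct = sum(1 for x, label in examples if int(x > threshold) == label)
    return correct / len(examples)


# 2. Train on the training set only, then 3. evaluate on unseen data.
threshold = fit_threshold(train_set)
test_accuracy = accuracy(threshold, test_set)
print(f"threshold={threshold:.2f}, test accuracy={test_accuracy:.2f}")
```

The key discipline is that `test_set` never influences `fit_threshold`; otherwise the accuracy figure says nothing about new data.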

Three broad categories cover most use cases:

Supervised learning uses labeled data — input-output pairs where humans have annotated the correct answers. Email spam classification, house price prediction, and medical diagnosis all use supervised learning. The model learns to map inputs to outputs by generalizing from labeled examples.

Unsupervised learning finds structure in unlabeled data. It clusters similar customers, detects anomalies in network traffic, or reduces the dimensionality of complex data. You don’t tell it what the right answer is; it discovers patterns you didn’t know existed.
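For a feel of what "finding structure" means, here is a minimal sketch of k-means clustering restricted to one dimension and two clusters. The spend figures and the function name `kmeans_two_clusters` are hypothetical, and the min/max starting point is a simplification chosen to keep the example deterministic.

```python
def kmeans_two_clusters(values, iterations=10):
    """Split 1-D values into two clusters; no labels are provided."""
    centroids = [min(values), max(values)]  # simple deterministic start
    clusters = [[], []]
    for _ in range(iterations):
        clusters = [[], []]
        for value in values:
            # Assign each value to its nearest centroid.
            nearest = 0 if abs(value - centroids[0]) <= abs(value - centroids[1]) else 1
            clusters[nearest].append(value)
        # Move each centroid to the mean of its cluster (keep it if empty).
        centroids = [
            sum(cluster) / len(cluster) if cluster else centroids[i]
            for i, cluster in enumerate(clusters)
        ]
    return centroids, clusters


# Hypothetical monthly spend per customer.
monthly_spend = [1, 2, 3, 10, 11, 12]
centroids, clusters = kmeans_two_clusters(monthly_spend)
print(centroids, clusters)
```

The algorithm is never told which customers are "low" or "high" spenders; the two groups emerge from the distances alone.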

Reinforcement learning learns through trial and error, receiving rewards or penalties based on its actions. This powers game-playing systems, robotics, and some recommendation engines. The agent discovers strategies through experimentation.
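The simplest setting that shows trial-and-error learning is a multi-armed bandit. The sketch below uses an epsilon-greedy agent; the payout table, step count, and exploration rate are all made-up values, and real reinforcement learning problems add state and delayed rewards on top of this.

```python
import random

# Hypothetical fixed payouts per action; the agent does not see this table.
PAYOUTS = [1.0, 5.0, 2.0]


def run_bandit(steps=500, epsilon=0.1, seed=0):
    """Epsilon-greedy agent: mostly exploit the best-known action, sometimes explore."""
    rng = random.Random(seed)
    estimates = [0.0] * len(PAYOUTS)  # the agent's current value estimate per action
    pulls = [0] * len(PAYOUTS)
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(len(PAYOUTS))  # explore a random action
        else:
            action = estimates.index(max(estimates))  # exploit the best estimate
        reward = PAYOUTS[action]  # feedback from the environment
        pulls[action] += 1
        # Incremental running average of the rewards seen for this action.
        estimates[action] += (reward - estimates[action]) / pulls[action]
    return estimates, pulls


estimates, pulls = run_bandit()
best_action = estimates.index(max(estimates))
print(best_action, pulls)
```

Without the occasional random action, the agent would settle on the first action that paid anything and never find the better one.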

The model itself is a mathematical function with adjustable parameters. During training, the algorithm tweaks these parameters to reduce a measure of error; in supervised learning, that error is the difference between its predictions and the actual labels in your training data. Once trained, the model makes predictions on new, never-before-seen data.
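Parameter tweaking is easier to picture with numbers. The sketch below fits a two-parameter line with plain gradient descent on mean squared error; the data, learning rate, and step count are assumptions chosen for a toy problem, not recommended defaults.

```python
# Toy data generated from y = 2x + 1; the model must recover those two numbers.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

weight, bias = 0.0, 0.0  # adjustable parameters, arbitrary starting point
learning_rate = 0.05     # hand-picked step size for this toy problem

for _ in range(2000):
    # Prediction errors under the current parameters.
    errors = [(weight * x + bias) - y for x, y in zip(xs, ys)]
    # Gradients of mean squared error with respect to each parameter.
    grad_weight = 2 * sum(e * x for e, x in zip(errors, xs)) / len(xs)
    grad_bias = 2 * sum(errors) / len(xs)
    # Nudge each parameter in the direction that reduces the error.
    weight -= learning_rate * grad_weight
    bias -= learning_rate * grad_bias

prediction = weight * 10.0 + bias  # an input that was not in the training data
print(round(weight, 3), round(bias, 3), round(prediction, 3))
```

Nothing in the loop mentions the numbers 2 or 1; the parameters drift toward them because that is where the error is smallest.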

When Traditional Software Beats Machine Learning

Here’s where I want to push back on the conventional narrative. Machine learning isn’t always the answer. Sometimes traditional software isn’t just adequate — it’s superior.

Interpretability can matter more than accuracy. If you’re in healthcare or finance, you often need to explain why a decision was made. A traditional rule-based system can show exactly which condition triggered a denial. A neural network making the same decision offers no comparably direct explanation. This isn’t just bureaucratic box-checking; it’s a legal and ethical requirement in many contexts.
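Here is a small sketch of what "show exactly which condition triggered a denial" can look like. The function `evaluate_loan` and both cutoffs are hypothetical, picked only to illustrate the pattern.

```python
def evaluate_loan(annual_income: float, total_debt: float, credit_score: int) -> dict:
    """Return a decision plus the exact rules that triggered it."""
    reasons = []
    if credit_score < 620:  # hypothetical cutoff
        reasons.append("credit score below 620")
    if total_debt > 0.4 * annual_income:  # hypothetical debt-to-income limit
        reasons.append("debt exceeds 40% of annual income")
    return {"approved": not reasons, "reasons": reasons}


decision = evaluate_loan(annual_income=50_000, total_debt=30_000, credit_score=700)
print(decision)
```

The explanation is a by-product of the logic itself, so it cannot disagree with the decision.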

Your data situation might not support machine learning. ML models need substantial training data, often more than organizations realize. If you have 50 examples of a rare event, no amount of clever algorithms will build a reliable model. Traditional software with explicit rules handles sparse-data scenarios better.

The cost-benefit calculation often favors simpler solutions. Building and maintaining ML systems requires specialized talent, computational resources, and ongoing monitoring for model drift. If a straightforward rule-based system solves your problem, adding ML complexity introduces overhead without proportional benefit.

Deterministic behavior is sometimes non-negotiable. When precision matters absolutely — flight control systems, mathematical computation, transaction processing — you want deterministic, explainable behavior. The statistical nature of ML predictions, with their confidence intervals and probability distributions, introduces uncertainty you may not want.

I’m not saying ML is overrated. I’m saying the pendulum has swung so far toward “everything should be ML” that people forget when simple deterministic code wins. The best engineers choose based on the problem, not the trend.

When Machine Learning Outperforms Traditional Software

Despite the caveats above, machine learning genuinely excels in scenarios where traditional approaches hit walls.

Complex pattern recognition. Identifying objects in images, transcribing speech, translating languages — these tasks resist explicit rule definition. A child recognizes a cat effortlessly, but describing the pixel patterns that constitute “cat” in algorithmic terms proved impossibly hard until ML showed the way. The same applies to fraud detection, where criminals constantly evolve their tactics and static rules get bypassed.

Scaling to massive data. Hand-written rules struggle to capture subtle patterns across billions of transactions. Machine learning models can be trained on datasets far larger than any human could analyze and surface correlations within them. Amazon’s product recommendations and Netflix’s content suggestions draw on patterns across hundreds of millions of users, something rules-based systems couldn’t approach.

Adapting to changing distributions. When the underlying data changes (customer behavior shifts, attackers change tactics, language evolves), traditional software requires manual updates. ML models can be retrained on new data to adjust to distribution shifts. This doesn’t eliminate the need for monitoring, but it shifts maintenance from code changes to data pipeline updates.

Personalization at individual level. Traditional software applies the same rules to everyone. ML enables experiences that adapt to individual preferences, usage patterns, and context. This is why streaming services, social media feeds, and smart assistants feel increasingly tailored — they learn from your behavior specifically.

The pattern is clear: when the problem involves finding patterns in data that humans can’t explicitly articulate, ML wins. When the problem involves deterministic logic that humans can specify, traditional software wins.

A Practical Decision Framework

When you’re deciding between approaches, answer these questions in order:

- Can you enumerate the rules explicitly? If yes, start with traditional software. Save ML for when rules prove too complex or numerous.

- Do you have labeled data at scale? Supervised ML typically needs thousands to millions of labeled examples. If you’re starting from scratch with no data infrastructure, factor that cost in.

- Does interpretability matter more than accuracy? Regulated industries, high-stakes decisions, and debugging requirements often favor explainable rules over marginally higher ML accuracy.

- How dynamic is the problem? If rules change weekly, ML’s ability to retrain offers an advantage. If rules are stable for years, traditional software’s maintenance simplicity wins.

- What are your operational constraints? ML requires monitoring for model degradation, data pipelines for continuous training, and infrastructure for inference. Traditional software has a lighter footprint: a build and deployment process plus ordinary bug fixes.

Most production systems I’ve seen actually use both: traditional software handles core logic and business rules, while ML models provide predictions for specific subproblems. The choice isn’t binary; it’s architectural.
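One way this hybrid shape can look in code is sketched below. Everything here is an assumption for illustration: the function names, the 0.9 threshold, and especially `stub_fraud_score`, which stands in for a trained model.

```python
from typing import Callable


def review_transaction(
    amount: float,
    account_frozen: bool,
    fraud_score: Callable[[float], float],
    review_threshold: float = 0.9,
) -> str:
    """Apply hard business rules first, then consult a model for the gray area."""
    # Deterministic rules: explicit, auditable, never overridden by the model.
    if account_frozen or amount <= 0:
        return "reject"
    # Learned component: a score in [0, 1] from whatever model is plugged in.
    if fraud_score(amount) >= review_threshold:
        return "manual_review"
    return "approve"


def stub_fraud_score(amount: float) -> float:
    """Stand-in for a trained model; a real one would use many more features."""
    return min(amount / 10_000, 1.0)


print(review_transaction(50.0, False, stub_fraud_score))
print(review_transaction(9_500.0, False, stub_fraud_score))
print(review_transaction(50.0, True, stub_fraud_score))
```

Passing the model in as a callable keeps the business rules testable on their own and lets the model be retrained or swapped without touching them.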

Addressing Common Misconceptions

“Machine learning will replace traditional programming.” It won’t. ML is a tool that works within infrastructure built on traditional software. The models need to be served, monitored, and integrated — all traditional software problems. What changes is what code you write: rule definitions versus training pipelines and model architectures.

“ML doesn’t require coding.” This is a dangerous myth. ML absolutely requires coding: typically Python with a framework such as TensorFlow or PyTorch, SQL for data manipulation, and shell scripts for automation. You write less business logic and more infrastructure code, but programming remains central.

“ML models learn forever and stay accurate.” Models degrade as data distributions shift: customer behavior changes, fraud patterns evolve, language shifts. This degradation is called “model drift,” and ignoring it is how ML projects fail silently. Production ML systems need continuous monitoring and periodic retraining.
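A very crude monitoring check can be sketched with the standard library alone. It compares the mean of one input feature in a recent window against the training data, which is only a proxy for drift; the feature values, the `drift_score` helper, and the alert threshold of 3.0 are all invented for the example, and real monitoring typically tracks many features and the model's accuracy as well.

```python
import statistics


def drift_score(training_values, live_values):
    """How far the live mean has moved, in units of training standard deviation."""
    baseline_mean = statistics.mean(training_values)
    baseline_std = statistics.stdev(training_values)
    return abs(statistics.mean(live_values) - baseline_mean) / baseline_std


ALERT_THRESHOLD = 3.0  # hypothetical; tune per feature in practice

# Invented values for one input feature, e.g. average order size.
training_window = [10, 12, 11, 9, 10, 11, 10, 12, 9, 11]
stable_week = [10, 11, 10, 12]
shifted_week = [15, 16, 14, 17]

for name, window in [("stable", stable_week), ("shifted", shifted_week)]:
    score = drift_score(training_window, window)
    status = "retrain?" if score > ALERT_THRESHOLD else "ok"
    print(f"{name}: score={score:.2f} -> {status}")
```

Even a check this simple turns a silent failure into a visible alert.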

“More data always improves ML models.” Up to a point, yes. Beyond a certain volume, you hit diminishing returns. Quality and relevance matter more than quantity. A carefully curated dataset of 10,000 examples can beat a noisy dataset of 10 million. Garbage in, garbage out applies more ruthlessly to ML than to traditional software, because ML lacks the explicit sanity checks humans embed in rule-based systems.

Looking Forward

The boundary between traditional software and ML continues to blur. Techniques like neural architecture search automate model design. Edge deployment makes ML feasible on devices without cloud connectivity. Hybrid systems combining symbolic reasoning with learned components push toward systems that are both adaptive and interpretable.

What won’t change is the fundamental insight: explicit knowledge versus discovered patterns. Both approaches have permanent places in your toolkit. The engineers who understand when each applies will outperform those who reach for one tool exclusively.

Your job isn’t to pick a winner. It’s to understand each approach well enough to choose correctly.